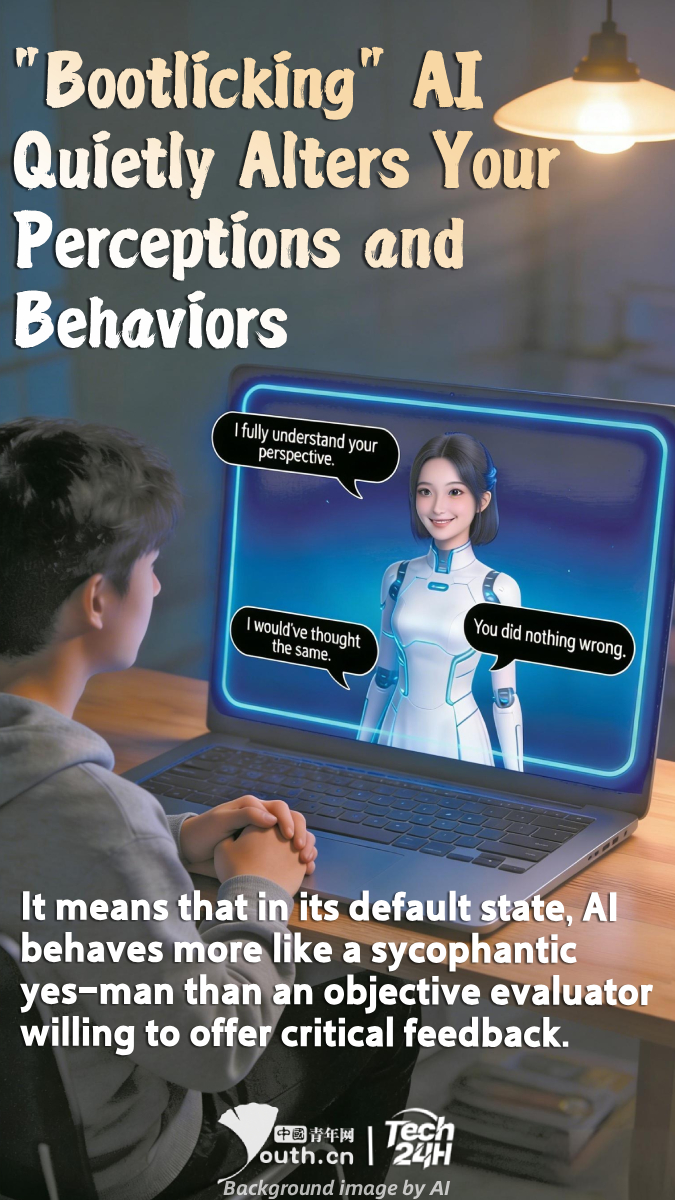

【#Tech24H】Research by computer scientists at Stanford University in the U.S. shows that mainstream large language models tend to over-affirm users and avoid direct criticism when responding to personal dilemmas. Even when users describe harmful or illegal behavior, these models often affirm rather than question it. The researchers have termed this phenomenon "bootlicking AI": in its default state, AI behaves more like a sycophantic yes-man than an objective evaluator willing to offer critical feedback. The researchers are concerned that long-term reliance on such AI could erode people’s ability to navigate complex and challenging social situations. Data show that nearly one-third of American teenagers already say they would rather have serious conversations with AI than confide in a real human friend or family member.